Today, atomic anxiety intersects with concerns about Artificial Intelligence, and the war in Iran shows us the catastrophic consequences of this entanglement. In the latest Atomic Anxiety Fellows blog, Yerdaulet Rakhmatulla writes about the dawn of Atomic-AI Anxiety…

Scroll TikTok. Algorithms amplify crisis narratives through memetic warfare, digitally desensitizing publics to conflict. Users cheer an AI-generated, Attack on Titan-inspired video captioned “WW3 anime opening” – 11.8M views of dread about atomic and lethal autonomous weapons, masked as brainrot.

This isn’t just a viral distraction. This is “Atomic Anxiety” amid the ongoing 2026 Iran War, now fused with military AI fears.

Alongside rising Atomic Anxiety, another term has re-emerged: Artificial Intelligence Anxiety. Once linked mostly to fear of the humanitarian and societal impacts of another transformative technology, it has expanded to take on existential-risk dimensions, including wars featuring WMD.

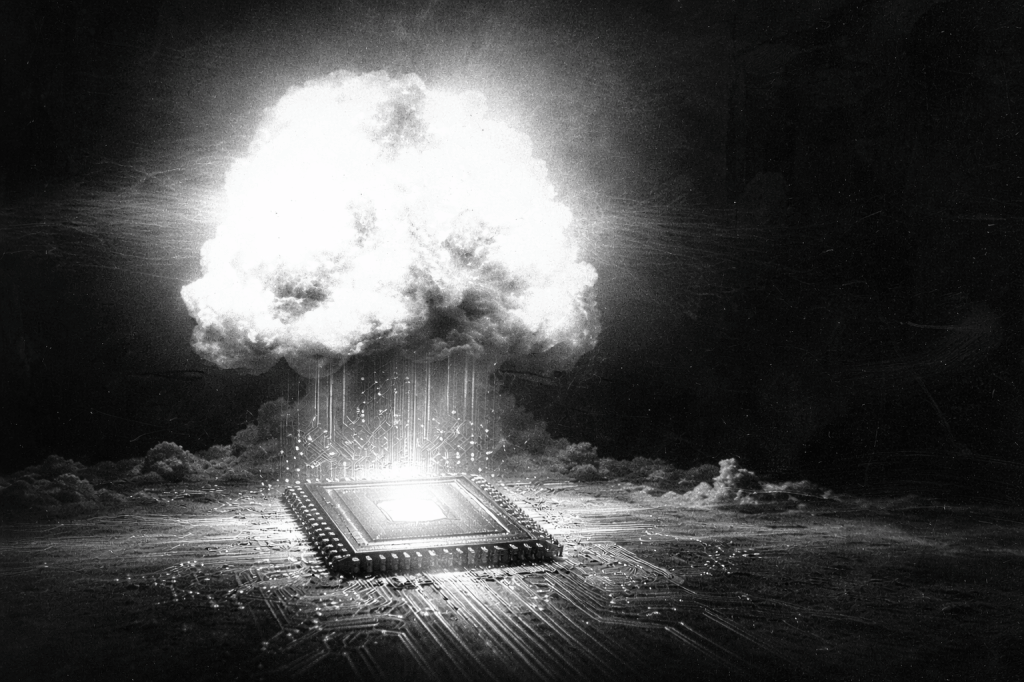

This blog proposes the derivative term ‘Atomic-AI Anxiety’, a hybrid reflecting how public and elite views of nuclear risks may change if advanced AI is integrated into military decision-making processes. The term arises amid the Gulf regional conflict and clashes between the AI industry and the US military, such as Anthropic’s standoff with the Pentagon over lethal AI.

What could be called “Atomic-AI Anxiety” is a hybrid discourse that links the potential of nuclear war with AI-enabled weaponry, a result of the convergence of Cold War-era nuclear anxiety with modern concerns about algorithmic warfare. This new narrative is becoming more prevalent in armed conflicts, AI governance disputes, and beyond.

Anxieties Revival

The United States and Israel continue illegal air and missile strikes on Iran, shrugging off efforts to end the conflict, such as the Omani Foreign Minister’s note on progress in the Geneva negotiations.

This has led to the assassination of Iranian leader Khamenei and other senior figures, alongside a death toll surpassing 1,000 civilians and the demolition of nuclear facilities and military sites. Satellite imagery from Vantor on March 1-2 demonstrated damage at Isfahan’s Natanz uranium enrichment facility, which the IAEA confirmed but deemed low-risk for radiological consequences.

The Iranian government condemned the strikes as an unlawful act of aggression and interventionism, and the IRGC has retaliated with ballistic missiles, cruise missiles, and UAVs targeting U.S. bases and critical infrastructure, including AWS’s AI data centers in the UAE and Bahrain, among other attacks in the GCC States.

From my vantage point in Kazakhstan, these tremors feel perilously close, as we share the Caspian Sea with Iran. They carry Atomic-AI Anxieties far beyond the Gulf region into Central Asia.

AI Anxiety Enters the Fray

Parallel to the Gulf missiles, AI governance fractured along ethical lines in the West. Anthropic’s CEO rejected US War Secretary Hegseth’s ultimatum for unfettered access to the company’s LLM, Claude, citing two risks: first, mass domestic surveillance and, second, a hypothetical nuclear scenario demanding lethal autonomous weapons systems (LAWS). US Defense Department spokesperson Wilson denied that the Pentagon was interested in either purpose and sought instead a broader authorization to use AI models for so-called “all lawful purposes”.

OpenAI stepped in for governmental projects, yet on March 4 Anthropic reopened talks with the authorities; consequently, the US military reportedly used Claude to inform the attacks in Operation Epic Fury on Iran, defying President Trump’s severance order.

Such developments unveil AI Anxiety’s nuclear turn: they undermine safeguards despite the ironic ethics debate and normalize digital dehumanization, a feature of AI-enabled targeting in armed conflicts that accelerates existential risks beyond the workplace to combat zones.

The shift is also visible in the US statement at the first thematic session on LAWS under the Convention on Certain Conventional Weapons (CCW): linguistic changes such as “good faith judgment” suggest a notable relaxation of normative expectations like “context-appropriate human control”.

Now, at a pivotal moment after a decade of discussions and ahead of the 2026 Review Conference to the CCW, negotiations on an autonomous weapons treaty could be launched. They are very much needed.

The AI-Nuclear Nexus: A Framework to Act

The Anthropic-Pentagon standoff conjures a loss of human control over militarized tools, and the ongoing 2026 Iran war rekindles the atomic fear of mushroom clouds. Combined, they deepen public paralysis yet also spur resistance.

Because these threats are linked, the “Atomic-AI Anxiety” proposal calls for the immediate integration of disarmament and AI-nuclear governance efforts in the New Nuclear Age and AI spring.

As a 2025 Cohort Fellow, my reflections showed me that action reduces such anxieties. To counter these risks, here is a three-step guide that anyone could practise:

- Act. Break paralysis by journaling fears and sparking local conversations. In my hometown, Shymkent, Kazakhstan, I ran a scenario-game workshop for Uzbek-Kazakh youth on nuclear-powered data centers’ environmental toll.

- Organize. Small steps accumulate and drive greater movements. As a youth member of ICAN and SKR, two leading coalitions campaigning to ban nuclear weapons and killer robots, respectively, I try to contribute to a variety of initiatives within these networks.

- Resist. As my civic duty, I advocate for policy on AI-nuclear affairs through platforms like Youth4Disarmament, an effort that yielded the intergenerational Statement on Emerging Technologies at the UN General Assembly’s First Committee.

Every step counts. Start today to reclaim agency.

Yerdaulet Rakhmatulla is the Founder & CEO of JASA, the first youth-led organization in Central Asia dedicated to addressing nuclear affairs and AI policy. Additionally, he co-founded the Qazaq Nuclear Frontline Coalition, which promotes nuclear justice and peace. As a representative of his generation at high-level UN conferences, Yerdaulet collaborates with esteemed organizations such as UNESCO and IAEA. His experience includes serving as a Youth Delegate at the TPNW2MSP and participating in the inaugural IAEA Nuclear Security Delegation for the Future at the 2024 ICONS Conference in Vienna.

In 2024, he played a key role in co-organizing the Nuclear Survivors Forum, featuring the Secretary General of Nihon Hidankyo, a 2024 Nobel Peace Prize Laureate, in Astana. He also co-led a Study Tour for German civil society organizations to engage with Kazakh nuclear frontline communities in Semey. Currently, Yerdaulet is a Hungaricum Scholar at Ludovika University of Public Service in Budapest. His academic focus lies in AI and nuclear governance, with expertise specifically in Central Asia and the Turkic States.

This blog is part of a series of articles by the Atomic Anxiety in the New Nuclear Age Fellows Cohort. These articles represent the view of the author and not necessarily those of the project as a whole or other individuals associated with the project.
